OpenInfra Live is a new, weekly, hour-long interactive show streaming to the OpenInfra YouTube channel every Thursday at 14:00 UTC (9:00 AM CT). Episodes feature OpenInfra release updates, user stories, community meetings, and more open infrastructure stories.

Ceph is a highly scalable, open source distributed storage solution offering object, block, and file storage. Join us as community members discuss the basics, ongoing development, and integration of OpenStack with Ceph.

Enjoyed this week’s episode and want to hear more about OpenInfra Live? Let us know what other topics or conversations you want to hear from the OpenInfra community this year, and help us to program OpenInfra Live! If you are running OpenStack at scale or helping your customers overcome the challenges discussed in this episode, join the OpenInfra Foundation to help guide OpenStack software development and to support the global community.

The OpenInfra Foundation’s Kendall Nelson hosted community members from Red Hat, Société Générale, and VEXXHOST. Tom Barron from Red Hat kicked off the episode with “Ceph in 60 Seconds”, an overview of Ceph, followed by an explanation of how it fits in with OpenStack via Cinder and Manila, as well as with Kubernetes.

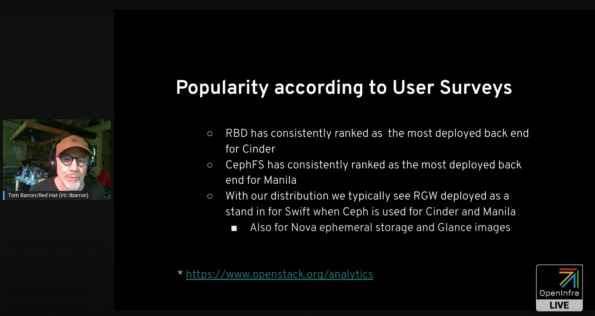

Ceph’s popularity and multi-tenancy situation

Why is Ceph so popular? “There is an economic advantage to deploying Ceph on commodity hardware, and Ceph is able to provide object, block, and file storage services from the same hardware using the same set of software, rather than having to purchase separate appliances, potentially from separate vendors for each service, or having to train operators to have separate skill sets to manage the storage technologies behind them,” Barron said. Improvements such as a common dashboard are making Ceph easier to manage.

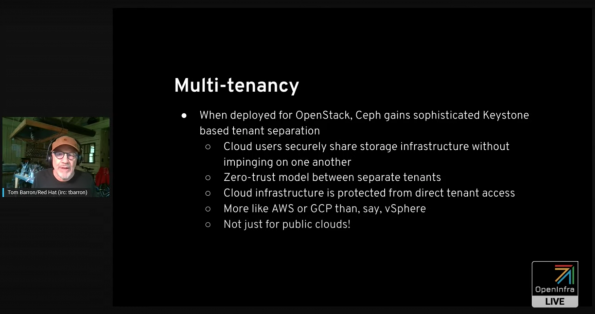

What does the multi-tenancy situation look like with Ceph and OpenStack? Barron explained that the multi-tenant separation with Ceph has the following capabilities:

- Cloud users securely share storage infrastructure without impinging on one another

- Zero-trust model between separate tenants

- Cloud infrastructure is protected from direct tenant access, more like AWS or GCP than a virtualization platform

- And it’s NOT just for public clouds!

Barron emphasized that Ceph is an open source project, which means that you can avoid vendor lock-in and avoid paying a third party to run something that you can’t inspect yourself. There is a vibrant Ceph community, whose users can always take part in the project conversation directly and influence new feature development.

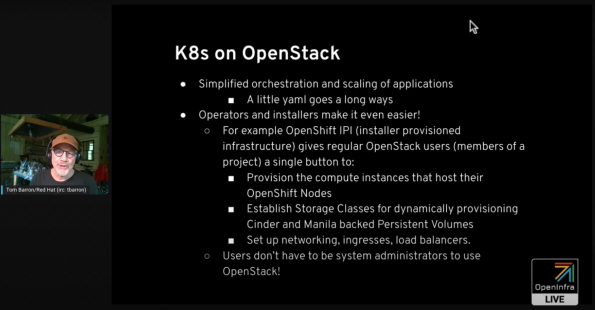

K8s on OpenStack with Ceph

Barron kicked off this section by debunking the popular yet false belief that “Kubernetes and OpenStack are opposed to each other.” Red Hat’s team strongly believes that “Kubernetes and OpenStack are complementary rather than exclusive.”

What Kubernetes brings to the table is simplified orchestration and scaling of applications. For example, OpenShift IPI (Installer Provisioned Infrastructure) gives regular OpenStack users a single button to provision all the OpenStack compute instances for their OpenShift nodes. It builds the Storage Classes they need for dynamic provisioning of Persistent Volumes with Cinder and Manila, and it sets up the networking, ingresses, load balancers, and so on.

In short, users don’t have to be system administrators to run applications on top of OpenStack if they are running them on top of Kubernetes on top of OpenStack.

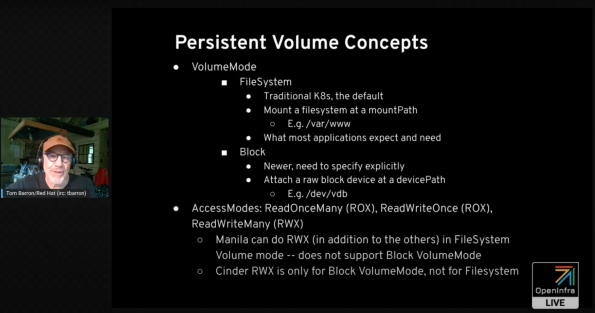

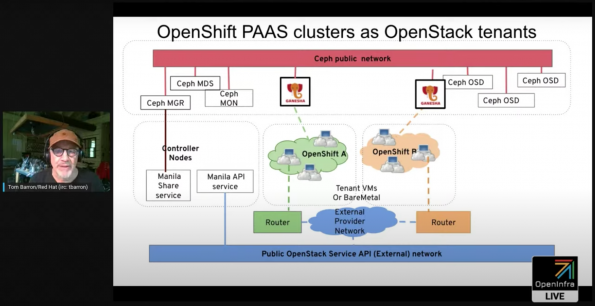

Volumes are crucial to running Kubernetes. Barron went on to explain two Persistent Volume concepts: VolumeMode and AccessMode.

Traditionally, Kubernetes worked with the Filesystem volume mode, which gives you a persistent volume at a mountPath as opposed to a devicePath. Nowadays, you can also use the raw Block volume mode.
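To make the difference between the two volume modes concrete, here is a minimal sketch that builds the relevant Kubernetes manifests as plain Python dictionaries. It was not shown in the episode; the claim names, images, sizes, paths, and the storage class name are all hypothetical. The field names (`volumeMode`, `accessModes`, `volumeMounts`/`mountPath`, `volumeDevices`/`devicePath`) are the standard Kubernetes ones.

```python
# Minimal sketch: builds Kubernetes manifests as plain dicts (no cluster needed).
# All object names, images, sizes, paths, and the storage class are hypothetical.

VOLUME_MODES = ("Filesystem", "Block")


def make_pvc(name, volume_mode, access_mode, size="10Gi", storage_class="example-class"):
    """Return a PersistentVolumeClaim manifest for the given modes."""
    if volume_mode not in VOLUME_MODES:
        raise ValueError(f"volumeMode must be one of {VOLUME_MODES}")
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "storageClassName": storage_class,
            "volumeMode": volume_mode,      # Filesystem or Block
            "accessModes": [access_mode],   # e.g. ReadWriteOnce, ReadWriteMany
            "resources": {"requests": {"storage": size}},
        },
    }


def make_container(name, image, volume_name, volume_mode, path):
    """Return a container spec that consumes the volume.

    Filesystem volumes are mounted at a mountPath; raw Block volumes are
    exposed as a device at a devicePath.
    """
    container = {"name": name, "image": image}
    if volume_mode == "Filesystem":
        container["volumeMounts"] = [{"name": volume_name, "mountPath": path}]
    elif volume_mode == "Block":
        container["volumeDevices"] = [{"name": volume_name, "devicePath": path}]
    else:
        raise ValueError(f"volumeMode must be one of {VOLUME_MODES}")
    return container


# A shared file system claim (the kind of volume a file share service would back).
shared_pvc = make_pvc("shared-data", "Filesystem", "ReadWriteMany")
fs_container = make_container("app", "example/app:latest", "shared-data", "Filesystem", "/data")

# A raw block claim, handed to the container as a device rather than a mount.
raw_pvc = make_pvc("raw-disk", "Block", "ReadWriteOnce")
block_container = make_container("db", "example/db:latest", "raw-disk", "Block", "/dev/xvda")

print(fs_container)
print(block_container)
```

The only structural difference on the consuming side is which list the volume appears in, which is why the helper branches on the volume mode rather than taking two separate path arguments.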

Barron showed a slide (featured above) of two OpenShift clusters using Manila on top of OpenStack, to demonstrate storage on the Ceph public network being shared via Ganesha to provide NFS service to separate OpenShift clusters that belong to regular users. There can be hundreds of these clusters on the OpenStack cloud, all unrelated to one another. The same setup applies equally to plain Kubernetes clusters.

Barron mentioned that people worry that, because of all the service layers involved, this would not work at container scale. What is meant by container scale is that, because it is easy to orchestrate the provisioning of persistent volumes for Kubernetes pods and containers across the cloud, you can get rapid bursts of creation requests. Barron added that he has been studying this, and so far has seen flat, consistent growth.

Kendall Nelson then asked Barron how viewers can connect with the communities.

TripleO Integration with Ceph

John Fulton from Red Hat followed Barron’s presentation with his own presentation on TripleO’s integration with Ceph.

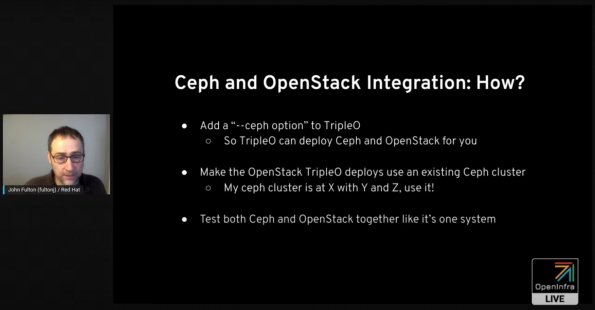

Fulton kicked off the presentation by reinforcing Barron’s earlier points about the open source community’s strong interest in Ceph and OpenStack. Given that interest, Fulton wants to make sure these features are easily accessible with TripleO.

Fulton went on to give an overview of a TripleO deployment, Ceph deployment tool changes, and usability enhancements.

The Deployed Ceph feature allows for more isolation so that you can deploy Ceph before you deploy OpenStack. The idea behind Deployed Ceph is strategic decoupling. Check out the Do’s and Don’ts of Deployed Ceph.

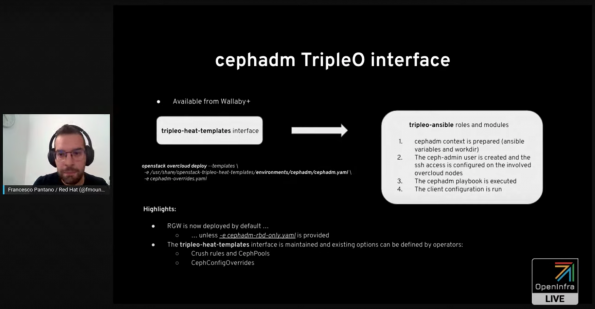

Fulton then handed off to Francesco Pantano from Red Hat, who covered the interface of the cephadm tool, a tool that bootstraps a tiny Ceph cluster for you so you only need to scale it.

Pantano went on to discuss TLS-everywhere, high availability, and skip-level upgrades. What’s next in TripleO Ceph integration? Pantano said the upgrade process is one of the biggest next steps.

Fulton and Pantano were asked:

- Are there plans for continued improvements to Ceph and TripleO integration with the upcoming release?

- How do you divide resources between Ceph and OpenStack in hyper-convergence?

Société Générale

Société Générale is one of Europe’s leading financial services groups. The over 150-year-old company supports 30 million clients in over 61 countries every day.

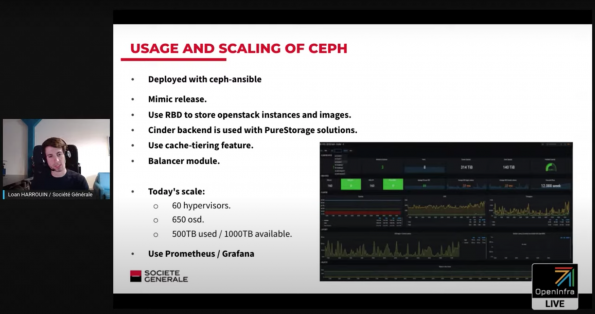

Société Générale first deployed OpenStack in 2018 on the Queens release in France with 20 hypervisors. Today they are running the Ussuri release with 350 hypervisors. Worldwide, 60 hypervisors are running Ceph on the Mimic release, with about 500 TB used and 1,000 TB available, and the team tries to always keep free space.

Société Générale is now planning to:

- Upgrade Ceph to the Pacific release

- Get rid of cache tiering because it hides a lot of complexity

- Deprecate the hyperconverged model in the biggest region

- Run containerized Ceph on Docker hosts

VEXXHOST

Mohammed Naser, CEO at VEXXHOST, OpenInfra Foundation board member, and OpenStack-Helm contributor, joined OpenInfra Live to share VEXXHOST’s story with Ceph and OpenStack. VEXXHOST is running several of its large clusters worldwide on Octopus, with a couple of Pacific deployments. Staying up to date with Ceph is really important, and the stability of Ceph makes for low-risk upgrades, says Naser.

VEXXHOST’s OpenStack control plane for all their deployments runs in Kubernetes, so VEXXHOST uses the Ceph RBD CSI driver to provision RBD volumes that are attached as persistent volumes for the OpenStack control plane, for things like RabbitMQ state and MariaDB storage. They also use RBD as block storage for VMs. With Cinder, any volume created in one of their public clouds, or in the private clouds they deploy for customers, is a Ceph volume.
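As a rough illustration of that pattern (not VEXXHOST’s actual configuration, which was not shown), the sketch below builds a Kubernetes StorageClass that points at the ceph-csi RBD provisioner, `rbd.csi.ceph.com`, plus a claim that a control-plane database could use. The class name, cluster ID, pool, claim name, and size are hypothetical placeholders, and the parameters are trimmed: a real class also needs parameters that reference the Ceph credential secrets.

```python
import json

# Provisioner name registered by the ceph-csi RBD driver.
RBD_PROVISIONER = "rbd.csi.ceph.com"


def rbd_storage_class(name, cluster_id, pool):
    """Return a trimmed StorageClass manifest for RBD-backed volumes.

    Assumption: only clusterID and pool are shown; secret-related
    parameters are deliberately left out of this sketch.
    """
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        "metadata": {"name": name},
        "provisioner": RBD_PROVISIONER,
        "parameters": {"clusterID": cluster_id, "pool": pool},
        "reclaimPolicy": "Delete",
    }


def control_plane_claim(component, storage_class, size):
    """Return a PersistentVolumeClaim for one control-plane component."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": f"{component}-data"},
        "spec": {
            "storageClassName": storage_class["metadata"]["name"],
            "accessModes": ["ReadWriteOnce"],  # one node mounts the RBD image
            "resources": {"requests": {"storage": size}},
        },
    }


# Hypothetical values, for illustration only.
storage_class = rbd_storage_class("ceph-rbd", "example-cluster-id", "kubernetes")
mariadb_claim = control_plane_claim("mariadb", storage_class, "20Gi")

print(json.dumps(storage_class, indent=2))
print(json.dumps(mariadb_claim, indent=2))
```

Deriving the claim’s `storageClassName` from the class object keeps the two manifests in sync, so renaming the class cannot leave a claim pointing at a class that no longer exists.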

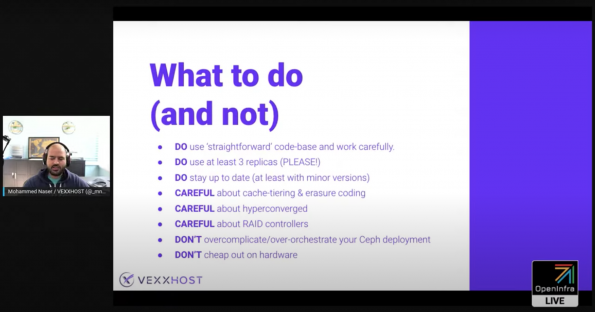

With several years of Ceph deployment, Naser offered some advice on what operators should do (and not do!) with their Ceph deployments.

Next Episode on #OpenInfraLive

Come learn more about OpenStack Xena, the 24th release of the open source cloud computing project. Hundreds of developers from over 120 organizations delivered OpenStack Xena and this episode of OpenInfra Live will highlight some of the key features, what use cases are impacted, and what operators can expect.

Tune in on Thursday, October 7 at 14:00 UTC (9:00 AM CT) to watch this #OpenInfraLive episode: OpenStack Xena: Open Source Integration and Hardware Diversity.

You can watch this episode live on YouTube, LinkedIn and Facebook. The recording of OpenInfra Live will be posted on OpenStack WeChat after each live stream!

Like the show? Join the community!

Catch up on previous OpenInfra Live episodes on the OpenInfra Foundation YouTube channel, and subscribe to the Foundation’s email communications to hear more OpenInfra updates!
