Today, if you want to run a hybrid cloud with OpenStack VMs and Kubernetes container workloads, you will most likely end up with one of the following:

- Kubernetes running on top of OpenStack (e.g. Magnum, Murano or Heat K8s cloud provider)

- Kubernetes running on bare metal beside OpenStack

- A combination of the two, where some container workloads run on bare metal and some on VMs for added isolation.

These approaches present new problems in terms of networking setup, for example:

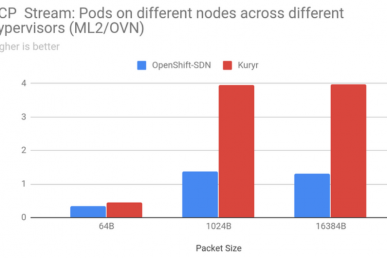

- Kubernetes expects you to use NodePort, LoadBalancer or ExternalName type services to access Pods from OpenStack VMs (i.e. from outside the Kubernetes cluster). In most cases, this reduces VM-to-Pod data-plane performance compared with the Pod-to-Pod or VM-to-VM cases.

- If you are using a networking solution that natively supports both OpenStack and Kubernetes, you could enable L3 connectivity between OpenStack and Kubernetes networks, but this requires manual configuration, which can become quite complicated if you also need to honor OpenStack security group rules.

- Double tunneling has a negative impact on data-plane performance (e.g. a Kubernetes ‘flannel’ tunnel encapsulated in an OpenStack ‘vxlan’ tunnel when running Kubernetes on top of OpenStack).
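To make the double-tunneling cost concrete, here is a rough back-of-the-envelope sketch of how much MTU is left after nesting tunnels. It assumes both layers use VXLAN over IPv4 with no VLAN tags and a 1500-byte physical MTU; these numbers are illustrative assumptions, not measurements from the setup described here, and MTU loss is only one part of the overhead (each layer also costs CPU time for encapsulation).

```python
# Per-packet overhead of one VXLAN encapsulation over IPv4, in bytes:
# outer IPv4 header (20) + UDP header (8) + VXLAN header (8)
# + the encapsulated inner Ethernet header (14).
VXLAN_OVERHEAD = 20 + 8 + 8 + 14


def inner_mtu(outer_mtu, tunnel_layers):
    """Return the MTU left for the innermost network after nesting
    `tunnel_layers` VXLAN tunnels on a link with `outer_mtu`."""
    return outer_mtu - tunnel_layers * VXLAN_OVERHEAD


def overhead_fraction(outer_mtu, tunnel_layers):
    """Return the share of each full-size packet spent on tunnel headers."""
    return (outer_mtu - inner_mtu(outer_mtu, tunnel_layers)) / outer_mtu


if __name__ == "__main__":
    physical_mtu = 1500  # assumed physical network MTU
    for layers, label in [(0, "no tunnel"), (1, "VM network"), (2, "Pod network in VM")]:
        print(label, inner_mtu(physical_mtu, layers), overhead_fraction(physical_mtu, layers))
```

With these assumptions, a Pod running in a VM sees 100 bytes less MTU than the physical link, twice the cost of a single tunnel.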

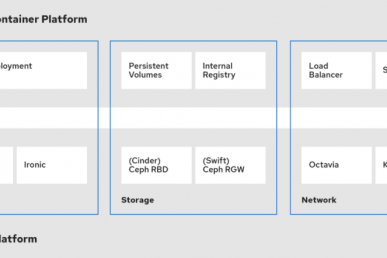

The OpenStack Kuryr project solves these problems by enabling native Neutron-based networking in Kubernetes. With Kuryr-Kubernetes, you can now run both OpenStack VMs and Kubernetes Pods on the same Neutron network if your workloads require it, or use different segments and, for example, route between them.

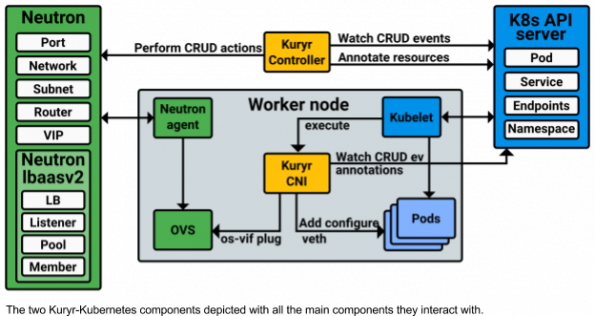
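The choice between a shared network and routed segments can be illustrated with a small sketch. The subnet names and CIDRs below are hypothetical, and the code only classifies address pairs; it does not talk to Neutron.

```python
import ipaddress

# Hypothetical Neutron subnets, named for this sketch only.
SUBNETS = {
    "shared-net": ipaddress.ip_network("10.0.0.0/24"),
    "pod-net": ipaddress.ip_network("10.1.0.0/24"),
}


def subnet_of(ip):
    """Return the name of the subnet containing `ip`, or None."""
    addr = ipaddress.ip_address(ip)
    for name, net in SUBNETS.items():
        if addr in net:
            return name
    return None


def path_between(vm_ip, pod_ip):
    """Classify VM-to-Pod traffic as same-subnet, routed, or unknown."""
    vm_net, pod_net = subnet_of(vm_ip), subnet_of(pod_ip)
    if vm_net is None or pod_net is None:
        return "unknown"
    return "same-subnet" if vm_net == pod_net else "routed"
```

A VM at `10.0.0.5` and a Pod at `10.0.0.9` would be classified as `same-subnet`, while a Pod at `10.1.0.9` would need a router between the two segments.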

Kuryr’s Kubernetes integration has two main components:

- Kuryr Controller: This daemon usually runs on the same nodes where the Kubernetes API runs. It watches the Kubernetes API for resource changes and allocates and manages the corresponding Neutron resources. Each resource management operation ends by annotating the Kubernetes resource that required it with information about the Neutron resource it was given.

- Kuryr CNI Driver: A Container Network Interface (CNI) compliant driver, installed on each Kubernetes worker node, that binds the Ports allocated by the Kuryr Controller to Pods.
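The division of labor between the two components can be sketched as follows. This is a simplified in-memory model, not Kuryr's actual code: `FakeNeutron`, `Controller`, `CniDriver`, the `Pod` class and the annotation key are all hypothetical names invented for illustration, and no real Kubernetes or Neutron API is called.

```python
import uuid
from dataclasses import dataclass, field

# Hypothetical annotation key, chosen for this sketch only.
PORT_ANNOTATION = "example.org/neutron-port-id"


@dataclass
class Pod:
    name: str
    annotations: dict = field(default_factory=dict)


class FakeNeutron:
    """In-memory stand-in for Neutron port management."""

    def __init__(self):
        self.ports = {}

    def create_port(self, network_id, device_name):
        port_id = str(uuid.uuid4())
        self.ports[port_id] = {
            "network_id": network_id,
            "device": device_name,
            "bound": False,
        }
        return port_id


class Controller:
    """Reacts to Pod events, allocates a port, and annotates the Pod."""

    def __init__(self, neutron, network_id):
        self.neutron = neutron
        self.network_id = network_id

    def on_pod_event(self, pod):
        if PORT_ANNOTATION in pod.annotations:
            return  # a port was already allocated for this Pod
        port_id = self.neutron.create_port(self.network_id, pod.name)
        pod.annotations[PORT_ANNOTATION] = port_id


class CniDriver:
    """Runs on the worker node and binds the allocated port to the Pod."""

    def __init__(self, neutron):
        self.neutron = neutron

    def add(self, pod):
        port_id = pod.annotations.get(PORT_ANNOTATION)
        if port_id is None:
            raise RuntimeError("no port allocated for pod %s yet" % pod.name)
        self.neutron.ports[port_id]["bound"] = True
        return port_id
```

In this model the annotation is the only channel between the two components: the driver never allocates anything itself, it only binds what the controller has already recorded on the Pod.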

We’ve prepared a short preview demo that shows how Kuryr can be used to enable network connectivity between OpenStack VMs and Kubernetes Pods/services.

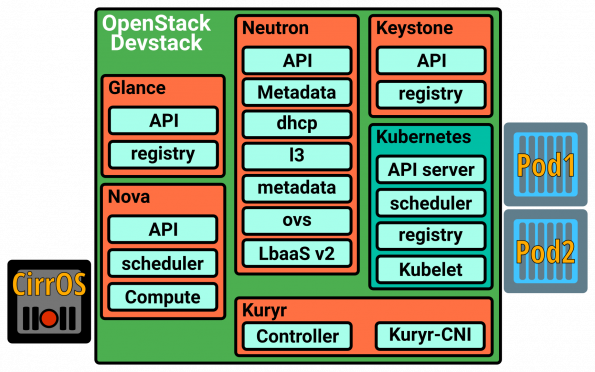

The services and workloads used in the demo are deployed in an all-in-one configuration using OpenStack Devstack.

For more information about the Kuryr-Kubernetes project internals, please check the spec and devref documentation, contact the Kuryr team on the #openstack-kuryr Freenode IRC channel, or write to the OpenStack development mailing list with the tags [openstack-dev][kuryr] in the subject line.


// CC BY

- Enable native Neutron-based networking in Kubernetes with Kuryr - January 24, 2017
