Lew Tucker is CTO of cloud computing at Cisco, and has been involved with the OpenStack community since the very beginning.

At the outset of his talk at the Paris Summit 2 weeks ago, Lew said a curious thing that made us sit up and take notice.

He said that speaking at the Summit provides opportunities to “…think about something different, and for that reason, mine is not going to be a technical talk. This is much more going to be a talk about this notion of a world of many clouds.”

Tucker continued: “We’ve seen cloud computing explode across the IT industry and everywhere else. One of the things I want to explore as OpenStack gets more and more successful is, what does the world look like when there’s not just three or four public cloud providers running OpenStack, but there are hundreds, and hundreds and hundreds in different countries?”

“What does that mean when we have, actually, a multifaceted world of many simultaneous OpenStack clouds that are more than the sum of their parts?”

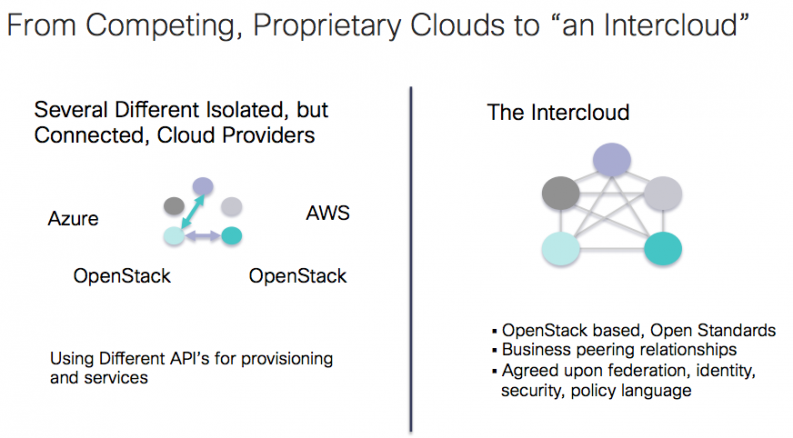

Tucker called this idea an Intercloud: the notion of moving from the isolated clouds of today to an interoperating, if heterogeneous, network of compute not unlike the Internet itself. For Tucker, the recent Juno release is a harbinger of such things to come.

Further, the Intercloud shouldn’t be a happy accident down the road, or a nicety. The Intercloud should be, in his opinion, something that we actively work to make happen, an explicit goal, even despite some of the near-term deterrents.

“We should all be really, really proud,” he said, careful to start with recent successes. But, Tucker continued, the real question is: “where is OpenStack going next?”

“I know on the Foundation and on the technical committee, we’re having a lot of these discussions. Can we keep OpenStack as one thing while it covers a lot of these different use cases, these different markets? I believe we can,” said Tucker.

But he warned that designing for the future is paramount; a long-term view is essential. So, how does the kind of interoperation that Tucker described (and that Cisco conceivably wants to see) come to pass?

Here’s a recap of the talk and its most important points. If you want to watch Lew’s full presentation, the video is embedded at the bottom of this post.

Winning the enterprise is key

If we can’t win the enterprise, the future is bleak. Even though “we may be saying this is all about new cloud applications, AKA cloud native applications,” Tucker emphasized the unavoidable truth that “IT shops control most of the spend,” and as such they have a disproportionate influence on how the future actually comes to pass, despite whatever our best intentions may be otherwise.

We have to be able to bring a cloud into these IT shops, and teach them how to “move those applications onto a cloud platform in a way they find satisfying.”

Cloud computing is absolutely winning in the strategic sense, and it’s the only real growth area right now in IT; the mandate is clear, Tucker argued. But the implication, of course, is that we’ve not yet won IT; it’s easy to say that we have, or will soon.

But the intellectually honest position is that the battle for the enterprise is still nascent. The move to the cloud for IT that Tucker described is less trivial than most acknowledge publicly, and this reporter, among others, appreciated his candor.

We have to bring up the rear, so to speak; an Intercloud isn’t possible when so many IT operations are off pace. And that’s not a critique of them, Tucker suggested. It is rather a challenge for us, for our community, and the platforms and services we provide.

We cannot expect the enterprise to come to us; “win” isn’t the right word. It’s just as possible we’ll lose if we’re not empathetic, humble, and, again, in service to our users, wherever they may be.

Don’t forget that OpenStack feels very different if you’re a user of OpenStack

“OpenStack is not so nice at times,” Tucker said, abruptly. “OpenStack feels very different if you’re a user of OpenStack.”

Think about that.

“There’s a lot of us who are developing OpenStack,” he said. And it’s too easy to forget to walk a mile or two in our users’ shoes. “We’ve also been kicking off these Operator Summits, which are very, very important. These are people who are trying to use OpenStack.”

We’re really trying to bring the operators together, get them closer to the whole community here, and interoperating these groups is an essential precursor to any “many clouds” vision, technologically speaking.

As was a theme elsewhere at the Summit, cultural norms and community development are not just a surrogate for technological prowess; they are in fact a driver of it.

“What we’re now arriving at,” Tucker said, “is the process that we want. This is the process I think that will help us move forward. You create a working group, you gather use cases, you expose the problems, the things we need to do, and you submit blueprints so that other people can start working on those things. And this is how we come together as a community.”

Continue building out next-generation networking services

Tucker fully expects to see a couple of new generations of network services in quick succession, and he thinks that’s a very good thing for the Intercloud vision.

“The simple ones now,” he said, “by and large are simply making a virtual machine form factor for particular networking functions running on an x86 box. And everyone likes it and says, ‘Oh, that’ll drive down the cost of it and everything else.’”

But as the argument goes, that’s not going to be sufficient.

The next generation will bring truly distributed systems, Tucker argued. And that’s where it gets interesting.

For those of you coming out of a background of distributed systems, this is going to be a fascinating place to watch, because if you’re going to have to “support millions of NAT endpoints, you’re going to need a cluster that has to be able to share some state and has to be able to horizontally scale.”

Of course, that’s not the way such boxes were designed originally, but they do have networking protocols that allow you to do what Tucker describes, today, and he clearly thinks that this will be a very fertile area of innovation over the next couple of years.

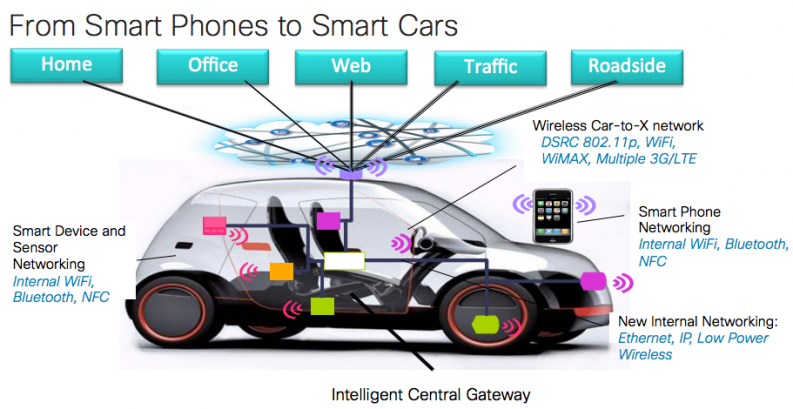

Taking OpenStack outside of the data center

Tucker emphasized how many new (and how many different) devices we have coming online.

So far we’ve focused on the infrastructural core of OpenStack, and rightfully so. We’ve been “primarily worried about how we get OpenStack to work inside of a data center.”

But, Tucker argued, now that we are connecting mobile devices, handheld devices, sensors, and more, we know that there’s an even larger swarm of these devices going out into the rest of the world, year after year. We’re just getting started in that sense.

“It’s interesting. We’ve got some people here from Cisco who’ve been working on using OpenStack to manage all of these different kinds of devices, and that I think is another interesting play.”

“We are also going to see OpenStack in cars” on a long enough timeline. They are, after all, just “network-based systems,” Tucker noted.

In fact, Tucker argued, what we’re seeing is that more and more management systems are moving into the cloud — cars are just one of a myriad of new endpoints that need upstream infrastructure.

“Many of you may know that we acquired a company named Meraki, which manages WiFi access points,” said Tucker. “And that’s now being done from a cloud and people love that. The cloud becomes an easier place to manage things that are spread out all over.”

Can OpenStack eventually serve as that kind of connected device fabric, outside of the data center?

Creating standards and protocols

Last but not least, for Intercloud to be a reality, let’s be honest, “it’s a lot of business negotiations,” Tucker said.

Take the example Tucker gave of the way that telephone systems work — there are a lot of serious and complex business relationships behind the infrastructure that we today take for granted, making it so that many simultaneous parties can, among other things, carry each other’s traffic.

With cloud computing, “it’s not just networking, but it’s also compute, it’s storage, it’s customers, identity management, and more that we need to be shared. We’re going to need probably some Intercloud protocols for that. That’s how distributed systems are able to work. They have to be able to exchange information, talking to each other in a common language to do these things between these clouds.”

Tucker was emphatic that he doesn’t yet know what those protocols are, and rather wants us to start thinking about them so that the entire community can begin designing them into our systems.

Such protocols would, among other things, make new kinds of service marketplaces and exchanges possible so that companies can develop applications and put them into an app exchange or something similar, which would in turn be easily consumed by any variety of different clouds.

“We really need things such as federation, not to mention policy, which likewise becomes really important because right now a lot of the ways we enforce policy is through networking that is very, very specific to either a vendor solution or a particular data center.”

The central question is, as Tucker drove home, “can we do this?”

Or as he later put it: “Can we have this kind of Intercloud based on this kind of community-driven model instead of waiting on large service providers to come out with this global cloud that we always dreamed of? I’m betting on the community.”

Emphasizing multi-vendor clouds

“If the Intercloud vision matures, happens, it will be multi-vendor.”

One of the things Tucker hears all the time as he is talking to customers about OpenStack, is that they don’t want vendor lock-in.

And Tucker agrees. “I want the customer getting a product from me because it’s the best product. I want to compete on the implementation. Customers should be going to the cloud that meets their requirements. I expect to see clouds that are OpenStack clouds catering to different markets; catering to a high performance computing market; catering to biology or agriculture, or healthcare, where special abilities to comply with different HIPAA rules around their data matter supremely. OpenStack has lots of room for competition, and that’s a good thing.”

But despite that (encouraged) heterogeneity, Tucker clearly insists that having a common model means you can have a larger market in which to play overall. That, for him, is the essential notion of OpenStack, and a way to have our cake and eat it too.

Conclusion

“So what, really, what is our future here?” Tucker asked the audience.

“We’re clearly on a trajectory to win in this marketplace. We’re seeing almost all the major IT vendors are now involved. And so what happens when we have lots and lots and lots of these OpenStack clouds out there?”

“Should we start thinking interoperation, and a ‘many clouds’ vision, so that we can shape the blueprints and everything else we’re going for with this kind of cloud-to-cloud interoperability? Yes.”

Tucker quickly turned back the clock to look back at how this same process (a network of networks, so to speak) happened for the Internet.

He said: “I’m not sure how many in the audience remember the Internet when it was this size. I actually do, it was in 1977, and it’s okay if only a few of us remember! We could send mail. It was wonderful. We could share files. It was wonderful. But we had no idea that that was going to become this…what we think of as the Internet today.”

Fortunately, a couple of major, critical decisions were made early on that allowed that kind of growth. It wasn’t a completely straight line, as anybody who was involved knows, but we were “able to get to this kind of scale. Now the rest of the world looks at this and says, ‘That’s the Internet.’ It wasn’t always that way.”

Before that, of course, there were lots of different networks, and you would move from one network to another, each with its own protocols.

“IP-based systems, TCP/IP standards: in the end, we arrived at protocols so that now even my 20-year-old daughter knows what HTTP is. At least she knows that’s the thing you’ve got to put in the front of something if you’re going to get to Facebook or something!”

This wasn’t done by one company, Tucker emphasized.

“Here were these different companies — these autonomous systems that are all of a sudden connected. Fierce competitors were suddenly exchanging packets, allowing other traffic to flow through their networks, all so that we could create the Internet.”

You had AT&T working with Level 3, working with Verizon, Google, Trident Telecom, Cisco, and hundreds of other companies as well, through these different kinds of distributed computing protocols that allowed us to truly interoperate, sending packets around the world.

Of course, mistakes can happen. Famously, at one point, two-thirds of the Internet’s YouTube traffic went through Pakistan, and Pakistan was blocking it, so it went into a black hole. There were even 18 minutes during which China Telecom essentially hijacked all of the traffic from Verizon, Tucker claimed. Both of these were believed to be human error, not malevolent action.

“The Internet is not without problems,” Tucker said, “but we’ve come a long way. We’re able to have and to use the Internet. I hear problems about OpenStack every day and I keep saying: ‘It’s okay. It’s okay.’ We will always have problems, but we keep moving forward and making progress, and winning people over.”

The crowd nodded in agreement.

“Is there a way for us to start thinking about OpenStack as becoming a cloud of clouds?” Tucker asked. “Well, could the Internet have been built by one company? I really don’t think so. It’s not likely one company could have done it.”

And so, will OpenStack be the community that builds this Intercloud?

Tucker’s answer: “I think that’s really up to us. That’s why I wanted to bring up this topic now so that we can start to think about that as we go forward and designing the next set of features for OpenStack, mindful of these very issues.”

To learn more, see Lew Tucker’s entire talk at the OpenStack Summit in Paris.
