Welcome to the PTL overview series, where we will highlight each of the projects and the upcoming features that will be in the next OpenStack release: Kilo. These updates are posted on the OpenStack Foundation YouTube channel, and each PTL is available for questions on IRC.

Nikhil Manchanda is the PTL for Trove, the database-as-a-service project of OpenStack. He spoke recently on Trove’s current status and its upcoming improvements scheduled for the Kilo release cycle.

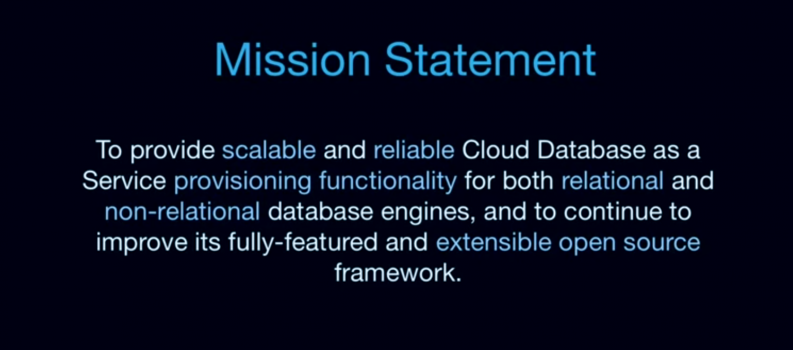

Trove’s mission

Nikhil started off his talk by presenting the Trove mission statement, explaining that Trove is a set of OpenStack services that let you make API calls to the cloud, provision datastores of different types, and then manage those instances throughout their lifecycle.

Nikhil emphasized that Trove specifically does provisioning. “It does not get into the business of data plane,” he said.

He also pointed out that a key element of Trove is its ability to support both relational database engines (like MySQL and PostgreSQL) and non-relational ones (like MongoDB, Cassandra, and Couchbase).

Trove, built on top of other OpenStack projects, is fully extensible and open source.

Juno Overview

Nikhil said that Trove saw many milestones in the Juno cycle, not least of which was a rapidly growing community. Here is what Trove looked like by the numbers during the Juno release cycle:

- 332 commits from 71 contributors

- 31 blueprints implemented

- 201 bugs fixed

- ~3500 code reviews

- 66,168 total lines of code changed

Neutron support

Before Juno, Trove only supported working on Nova networking. The project has now added support for Neutron.

Nikhil also said that the team has made the relevant Horizon enhancements, so that if Neutron is enabled when you create a Trove instance, you have the option to choose which network you want to be on or which ports you want to be attached to.

Replication

When you create a new Trove instance, you can specify that another existing Trove instance should be set up as a “master,” and your new instance should be set up as its “slave.”

Once you make that API call, Trove will take care of setting up the replication account and the replication between the master and the slave.
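As a rough illustration of what such a call might carry, the sketch below builds a request body for a new instance that points at an existing master. The function name and the field names (`replica_of`, `flavorRef`, `volume`) are assumptions made for this example, not taken from the talk; check them against the Trove API reference for your release.

```python
import json


def build_replica_request(name, flavor_ref, volume_size_gb, master_id):
    """Build an illustrative request body for a new instance that replicates master_id."""
    return {
        "instance": {
            "name": name,
            "flavorRef": flavor_ref,
            "volume": {"size": volume_size_gb},
            # Assumed field name: identifies the existing instance to replicate from.
            "replica_of": master_id,
        }
    }


# Placeholder values only; a real call needs a real flavor and instance ID.
body = build_replica_request("orders-replica", "7", 5, "MASTER-INSTANCE-ID")
print(json.dumps(body, indent=2))
```

The point of the sketch is that the caller only names the source instance; setting up the replication account and the replication itself stays on the Trove side.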

The team also made sure there is a way to detach the slave from the master and cut off replication if the master is down, or if a user wants to promote the slave to be its own master.

According to Nikhil, this is an ongoing process that will be made more stable in Kilo.

Clustering

Based on feedback Nikhil and others heard from users and operators during Icehouse, Trove added the ability to spin up a MongoDB cluster. That allows for high availability through replica sets and also allows the cluster to grow horizontally by adding new shards.

Configuration Groups Enhancements

Trove also added default configuration templates on a per-datastore and per-version basis. That means that users can now add different templates if they are deploying a different version of a datastore. This is useful if a user wants to deploy both MySQL 5.5 and MySQL 5.6 in the same environment, because the configuration template has to be different for each. Trove now supports that.

Users can also create configuration groups, which allow them to override the default configurations in templates through the API.
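The relationship between per-version templates and configuration-group overrides can be pictured with a minimal sketch. Everything here is hypothetical (the template contents, the `DEFAULT_TEMPLATES` table, and the `effective_config` helper); it only illustrates the idea that group values take precedence over template defaults.

```python
# Hypothetical default templates, keyed by (datastore, version).
DEFAULT_TEMPLATES = {
    ("mysql", "5.5"): {"max_connections": 100, "key_buffer_size": "50M"},
    ("mysql", "5.6"): {"max_connections": 100, "performance_schema": "ON"},
}


def effective_config(datastore, version, overrides=None):
    """Return the default template for a datastore version with overrides applied."""
    template = DEFAULT_TEMPLATES[(datastore, version)]
    merged = dict(template)         # copy so the shared template is never mutated
    merged.update(overrides or {})  # values from the configuration group win
    return merged


config = effective_config("mysql", "5.6", {"max_connections": 250})
print(config)
```

Keying the templates by both datastore and version is what lets MySQL 5.5 and 5.6 carry different defaults side by side.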

Nikhil said that the team also tightened up the validation of the values for those configuration parameters. They’re now backed by a schema that tells you the minimum or maximum value for each parameter. That adds an element of basic error-checking so users aren’t shooting themselves in the foot by entering a value that doesn’t fit that parameter.
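A minimal sketch of this kind of range checking follows. The rule set, the parameter limits, and the `validate` helper are invented for illustration and are not Trove’s actual validation rules.

```python
# Hypothetical validation rules; the real rules are defined per datastore version.
RULES = {
    "max_connections": {"type": int, "min": 1, "max": 65535},
    "innodb_buffer_pool_size": {"type": int, "min": 0},
}


def validate(values, rules=RULES):
    """Return a list of readable errors; an empty list means every value passed."""
    errors = []
    for name, value in values.items():
        rule = rules.get(name)
        if rule is None:
            errors.append(f"{name}: unknown parameter")
            continue
        if not isinstance(value, rule["type"]):
            errors.append(f"{name}: expected {rule['type'].__name__}")
            continue
        if "min" in rule and value < rule["min"]:
            errors.append(f"{name}: {value} is below the minimum {rule['min']}")
        if "max" in rule and value > rule["max"]:
            errors.append(f"{name}: {value} is above the maximum {rule['max']}")
    return errors


print(validate({"max_connections": 0}))
```

Rejecting a bad value at the API boundary means the mistake surfaces as an error message instead of a datastore that fails to start.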

DataStore Improvements

The other noteworthy improvements in Juno were the addition of initial support for PostgreSQL and of backup and restore for Couchbase. This is an ongoing effort that the team will continue to work on throughout the Kilo release cycle.

Looking Ahead to Kilo

Nikhil laid out quite a few improvements for Trove, some of which are continuations of efforts that started in Icehouse or Juno and others that are new for this release.

Specs

Nikhil explained that, like other OpenStack projects, Trove will be moving back towards the old specs process. In Juno, Trove did its blueprints in wiki pages, but had problems with reviews because there was no good mechanism for annotation and users could not easily track changes. It was also difficult to get feedback from operators and users.

"We found that a lot of that feedback was happening through email and mechanisms outside of the Wikipage, so everyone who was using the Wikipage didn’t have the full context."

To fix those problems in the future, Trove will move back to doing specs using Gerrit. Read more information on the Blueprints wiki page.

More Datastore Improvements

In addition to incremental improvements for existing datastores, Trove will also introduce the initial implementations of new datastores, including CouchDB and Vertica.

Nikhil also said that the team wants to add an API that can fetch datastore-specific logs from instances. This would be useful when users run queries on those datastores: if something goes wrong, they need a programmatic way to figure out the problem. In most scenarios, they don’t have actual access to the instances, so the only way to figure it out is through the API.

The idea here is that users could get certain content from those datastore logs (with operator permission) like the MySQL error log or the slow query log.

Building on Replication

In Juno, Trove allowed users to create a new instance as a replica and then detach the replica from its source, but this could only be done through Trove clients. In Kilo, the team plans to close the loop and add Horizon support for replication.

The Trove community also plans to improve replication by adding support for GTID-based replication for MySQL.

Trove also plans to provide support for failover in the next release. The team is looking at how it can leverage information from Trove Heartbeat to fail over from a master instance to a slave instance, or give users and operators the tools to set it up themselves, based on certain configurable timeouts.
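The heartbeat-plus-timeout idea can be sketched in a few lines. This is only an illustration of the general pattern, not Trove’s design: the `should_fail_over` function and the 60-second timeout are assumptions made for the example.

```python
from datetime import datetime, timedelta


def should_fail_over(last_heartbeat, now, timeout):
    """Return True when the master has been silent for longer than the timeout."""
    return now - last_heartbeat > timeout


# Hypothetical operator-configurable timeout.
timeout = timedelta(seconds=60)
now = datetime(2015, 1, 9, 12, 0, 0)

# A master last heard from 90 seconds ago has exceeded the 60-second timeout.
print(should_fail_over(now - timedelta(seconds=90), now, timeout))
```

Making the timeout configurable lets operators trade faster failover against the risk of reacting to a brief network hiccup.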

Building out clusters

Trove has already added the clustering API, which currently supports MongoDB. For Kilo, the team will focus on adding clustering support for other datastores, including Cassandra, Vertica, and XtraDBCluster (Galera).

Paying off Technical Debt

Trove also plans to address a number of housekeeping items in the upcoming release that will make it more stable and easier to use.

First on that list is deprecating the third-party external Trove CI so that all functional/int-tests run in a devstack-vm-gate environment.

Another piece of technical debt the Trove community will pay off is cleaning out deprecated Oslo-incubator code that exists in the codebase and moving to the graduated Oslo modules. This is ongoing work; Nikhil said that the Trove project will be vigilant in keeping on top of the changes in Oslo.

Finally, Trove will also add support for upgrade testing using Grenade. Grenade is an OpenStack tool that lets you run devstack for a previous release, upgrade to a new release in devstack and then make sure the resources that were provisioned in the previous version still work in the newer versions.

Simplifying Ops

One of the biggest and most consistent pieces of feedback that Nikhil heard during the Kilo Summit was that it’s too difficult to build Guest Images today. Trove is working on making that easier in this release cycle.

Today, there is no easy way to do that — the only option is to use the redstack scripts, which are old and convoluted. Trove wants to introduce a standalone way to build the Trove Guest images without breaking into redstack.

Nikhil also mentioned wanting to improve documentation, not just for image building, but also for getting started with and deploying Trove.

Get involved

The Trove community is growing rapidly and is always looking for new members and new ideas. If you’re interested in getting involved, get in touch with Nikhil or head to the Trove IRC:

IRC (Freenode): #openstack-trove

Email: [email protected]

To see the full Trove update, be sure to check out the full webinar here:
