Workday’s OpenStack journey has been a fast one: from no cloud to five distributed data centers, over a thousand servers and 50,000 cores in four years.

The team has done much of the work themselves. At the Sydney Summit, Edgar Magana and Imtiaz Chowdhury, both of Workday, gave a talk about how they work with Kubernetes to achieve zero downtime for large-scale production software-as-a-service.

“We’re very happy about what we’ve achieved with OpenStack,” says Magana, who has been involved with OpenStack since 2011 and currently chairs the User Committee. (You can read more about OpenStack at Workday in this Superuser story.)

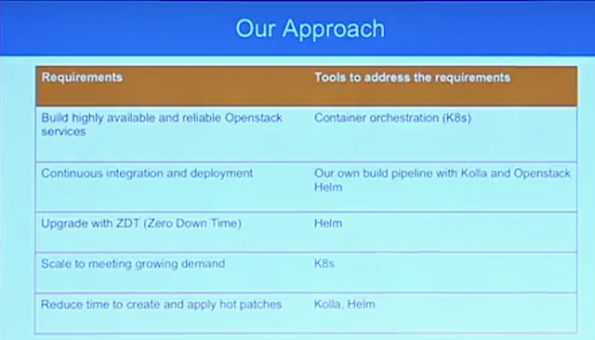

As deploying OpenStack services on containers becomes simpler and more popular, operators are jumping on the container bandwagon. However, although many open source and paid solutions are available, few offer the options to customize an OpenStack deployment to meet security, business and operational requirements.

“Everything we’ve done so far, it’s fully automated and we do it in a way that developers can make changes and deploy it all the way to production after getting it tested,” says Chowdhury. “We also want to make sure we can upgrade and maintain with zero downtime.”

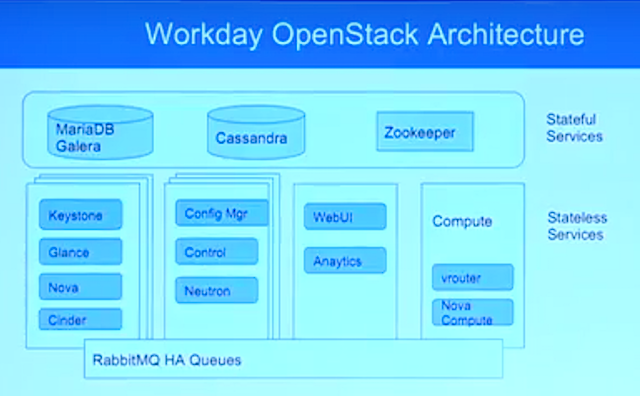

Magana gave an overview of the current architecture at Workday, noting that “We’re not doing anything crazy, we have a typical reference architecture for OpenStack, but we have made a few changes.”

Where you’d normally have the OpenStack controller with all the OpenStack projects (Keystone, Nova, etc.), Workday decided to abstract out the stateful services and build out what they call an “infra server.”

Coming at it from an ops perspective, they go into the details of operationalizing a production-grade deployment of OpenStack on Kubernetes in a data center using community solutions.

They cover:

- How to build a continuous integration and deployment pipeline for creating container images using Kolla

- How to harden OpenStack service configuration with OpenStack-Helm to meet enterprise security, logging and monitoring requirements

In this 40-minute talk, the pair also share lessons learned (“the good, the bad and the ugly”) and best practices for deploying OpenStack on Kubernetes running on bare metal.
