Over the past two years, the software-defined infrastructure (SDI) team at Linaro has worked to successfully deliver a cloud running on Armv8-A AArch64 (Arm64) hardware that’s interoperable with any OpenStack cloud.

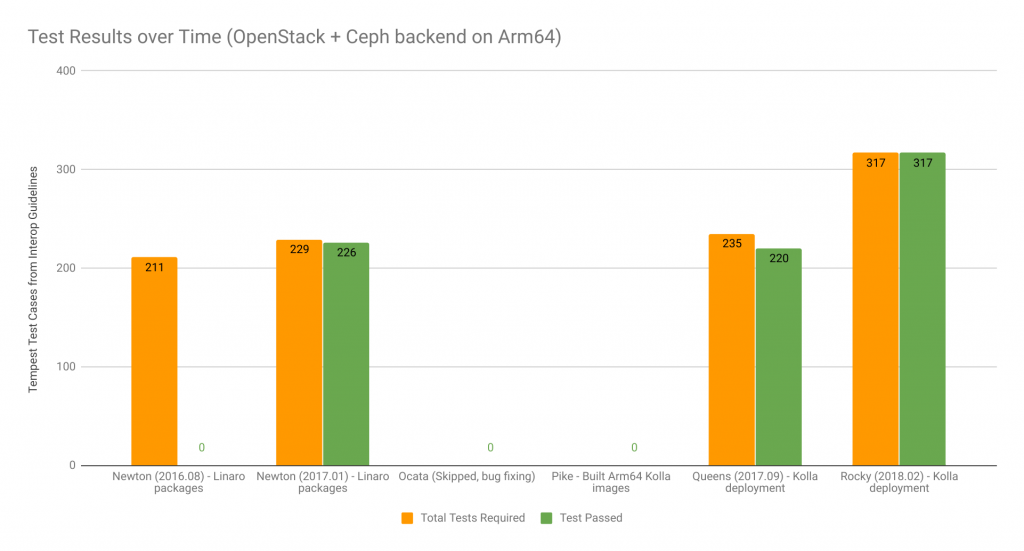

To measure interoperability with other OpenStack clouds, we use the OpenStack interop guidelines as a benchmark. Since 2016, we’ve run tests against different OpenStack releases – Newton, Queens and most recently Rocky. With Rocky, OpenStack on Arm64 hardware passed 100 percent of the tests in the 2018.02 guidelines, with enough projects enabled that Linaro’s deployment with Kolla and kolla-ansible is now compliant. This is a big achievement. Linaro is now able to offer a cloud that is, from a user perspective, fully interoperable while running on Arm64 hardware.
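To make the "100 percent" figure concrete, here is a minimal sketch of how a pass rate against a guideline could be computed. It assumes a heavily simplified guideline layout (components listing required capabilities, and capabilities listing their tests); the `GUIDELINE` data, the test names and the helper functions are hypothetical placeholders, not Linaro's tooling or the real 2018.02 content.

```python
from typing import Dict, Iterable, Set


def required_tests(guideline: Dict) -> Set[str]:
    """Collect the test names behind every *required* capability."""
    required_caps: Set[str] = set()
    for component in guideline["components"].values():
        required_caps.update(component.get("required", []))

    tests: Set[str] = set()
    for cap in required_caps:
        tests.update(guideline["capabilities"][cap]["tests"])
    return tests


def compliance(guideline: Dict, passed: Iterable[str]) -> float:
    """Return the percentage of required tests found in `passed`."""
    needed = required_tests(guideline)
    if not needed:
        return 100.0
    return 100.0 * len(needed & set(passed)) / len(needed)


# Hypothetical, trimmed-down guideline: all names are placeholders.
GUIDELINE = {
    "components": {
        "compute": {
            "required": ["compute-servers-create"],
            "advisory": ["compute-extra"],  # advisory tests do not count
        },
        "object": {"required": ["objectstore-object-get"]},
    },
    "capabilities": {
        "compute-servers-create": {
            "tests": {"test_create_server": {}, "test_list_servers": {}}
        },
        "compute-extra": {"tests": {"test_extra": {}}},
        "objectstore-object-get": {"tests": {"test_get_object": {}}},
    },
}

if __name__ == "__main__":
    results = ["test_create_server", "test_list_servers", "test_get_object"]
    print(f"{compliance(GUIDELINE, results):.0f}% of required tests passed")
```

Only required capabilities count towards the score in this sketch, which is why enabling enough projects (object storage, for example) matters as much as the tests themselves passing.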

So what have we done so far towards making Arm a first-class citizen in OpenStack?

The Linaro Reference Architecture: A simple OpenStack multi-node deployment

We started with a handful of Arm64 servers, installed Debian/CentOS on them and tried to use distro packages to create a very basic OpenStack multi-node deployment. At the time, Mitaka was the current release and we didn’t get very far. We kept finding and fixing bugs, trying to contribute the fixes upstream and to Linaro’s setup simultaneously, with all the backporting problems that entails. In the end, we decided to build our own packages from OpenStack master (which would later become Newton) and start testing and fixing issues as we found them.

The deployment was a very simple three-node control plane and N compute nodes, plus Ceph for storage of virtual machines and volumes. It was called the Linaro Reference Architecture, and its purpose was to ensure that all Linaro engineers conducting testing remotely generated comparable results and were able to accurately reproduce failures.

The Linaro Developer Cloud generates data centers

In 2016, Arm hardware was very scarce and challenging to manage (with a culture of boards sitting on engineers’ desks). The team therefore built three data centers (in the United States, China and the United Kingdom) so that Linaro member engineers would find it easier to share hardware and automate workloads.

During Newton, Linaro cabled servers, installed operating systems (almost by hand from a local PXE server) and tried to install/run OpenStack master with Ansible scripts that we wrote to install our packages. A cloud was installed in the UK with this rudimentary tooling and a few months later we were at the OpenStack Summit Barcelona, demoing it during the OpenStack Interoperability Challenge 2016.

These were early days for Linaro’s cloud offering and the workload was very simple (LAMP), but it spun up five VMs and ran on a multi-node Newton Arm64 cloud with a Ceph backend, successfully and fully automated, without any architecture-specific changes. Linaro’s clouds are built on hardware donated by Linaro members, and capacity from the clouds is contributed to specific open-source projects for Arm64 enablement under the umbrella of the Linaro Developer Cloud.

After that Interoperability Challenge, a team of fewer than five people spent significant time working on the Newton release, fixing bugs across the entire stack (kernel, qemu, libvirt, OpenStack) and keeping up with new OpenStack features. For every new release we used, the interop bar was raised: we were testing against two moving targets, the interop guidelines and OpenStack itself.

Going upstream: Kolla

During Pike we decided to move to containers with Kolla, rather than building our own. Working with an upstream project meant our containers would be consumable by others and they would be production ready from the start. With this objective in mind, we joined the Kolla team and started building Arm64 containers alongside the ones already being built. Our goal was to make the scripts multi-architecture aware and ensure we could build as many containers as necessary to run a production cloud. Kolla builds a lot of containers that we don’t really use or test on our basic setup, so we only enabled a subset of them. We agreed with the Kolla team that our containers would be Debian-based, so we added Debian support back into Kolla, which was at risk of being deprecated because no one was responsible for maintaining it at the time.

Queens was the first release that we could install with kolla-ansible. Rocky is the first one that’s truly interoperable with other OpenStack deployments. For comparison, during Pike we didn’t have object storage support due to a lack of manpower to test it. This support was added during Queens and enabled as part of the services of the UK Developer Cloud.

Once Linaro had working containers and working kolla-ansible playbooks to deploy them, we started migrating the Linaro Developer Cloud away from the self-built Newton packages and onto a Kolla-based deployment.

Being part of upstream Kolla efforts also meant committing to test the code we wrote. This is something we have started doing, but there’s still more ground to cover. As a first step, Linaro has contributed capacity in its China cloud to OpenStack-Infra, and the infra team were most helpful in bringing up all their tooling on Arm64. This cloud has connectivity challenges when talking to the rest of the OpenStack Foundation infrastructure that still need to be resolved. In the meantime, Linaro has given OpenStack-Infra access to the UK cloud.

The UK Linaro Developer Cloud was upgraded to Rocky without ever going into production with Queens. This means it will be Linaro’s first available zone that is fully interoperable with other OpenStack clouds. The other zones will be upgraded shortly to a Kolla-based Rocky deployment.

There are a few changes we’d like to highlight that were necessary to enable Arm64 OpenStack clouds:

• Ensuring images boot in UEFI mode

• Being able to delete instances with NVRAM

• Adding virtio-mmio support

• Adding graphical console

• Making the number of PCIe ports configurable

• Enabling Swift

• Updating documentation
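As an illustration of the first item in the list above, the sketch below shows one way an operator might tag an Arm64 image so that it is booted with UEFI firmware. `architecture` and `hw_firmware_type` are Glance image properties; the helper functions are hypothetical, and whether the firmware type must be set explicitly depends on the release and the compute driver defaults, so treat this as an assumption rather than a description of Linaro’s setup.

```python
from typing import Dict, List


def image_properties(architecture: str) -> Dict[str, str]:
    """Build image properties for a guest of the given architecture.

    Assumption: Arm64 (aarch64) guests are booted via UEFI, so the
    firmware type is set explicitly for them and left unset otherwise.
    """
    props = {"architecture": architecture}
    if architecture == "aarch64":
        props["hw_firmware_type"] = "uefi"
    return props


def as_cli_args(props: Dict[str, str]) -> List[str]:
    """Render properties as repeated `--property key=value` arguments."""
    args: List[str] = []
    for key, value in props.items():
        args += ["--property", f"{key}={value}"]
    return args


if __name__ == "__main__":
    print(" ".join(as_cli_args(image_properties("aarch64"))))
```

The resulting arguments could be appended to an `openstack image set` or `openstack image create` command for the image in question.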

We’ve also been contributing other changes that were not necessarily architecture related but related to the day-to-day operation of the Linaro Developer Cloud. For example, we’ve added some changes to Kolla that make it easier to deploy monitoring. For the Rocky cycle, Linaro ranks fifth among contributors to the Kolla project according to Stackalytics data.

What’s next?

Once the Linaro Developer Cloud is fully functional on OpenStack-Infra, we’ll be able to add gate jobs for the Arm64 images and deployments to the Kolla gates. This is currently a work in progress. The agreement with Infra is that any project that wants to have a go at running on Arm64 can also run tests on the Linaro Developer Cloud, which enables anyone in the OpenStack community to run tests on Arm64. Linaro is still working on adding enough capacity to make this a reality during peak testing times; in the meantime, experimental runs can be added to get ready for it.

Conclusion

It’s been an interesting journey, particularly when asked by engineers if we were running OpenStack on Raspberry Pi! Our response has always been: “We run OpenStack on real servers with IPMI, multiple hard drives, reasonable amounts of memory and Arm64 CPUs!”

We’re actively using servers with processors from Cavium, HiSilicon, Qualcomm and others. We’ve also found and fixed bugs in the kernel, in the server firmware and in libvirt, and added some features like a guest console. Libvirt made multi-architectural improvements when it reached version three; we’ve been eagerly keeping up with libvirt over the past releases, especially when Arm64 improvements came along. There are still issues when it comes to libvirt fully coping with Arm64 servers and hardware configurations. We’re looking into all the missing pieces in the stack that are needed for live migration to work across different vendors.

As with any first deployment, we found issues in Ceph and OpenStack when running the Linaro Developer Cloud in production, since running tests on a test cloud is hardly equivalent to having a long-standing cloud with VMs that survive host reboots and upgrades. As a result, we’ve had to improve maintainability and debuggability on Arm64. In our 18.06 release (we produce an Enterprise Reference Platform that gives interested stakeholders a preview of the latest packages), we added a few patches to the kernel that allow us to get crashdumps when things go wrong.

We’re currently starting to work with the OpenStack-Helm and LOCI teams to see if we can deploy Kubernetes smoothly on Arm64.

If you are interested in running your projects on Arm64, get in touch with us!

About the author

Gema Gomez, technical lead of the SDI team at Linaro Ltd, joined the OpenStack community in 2014.
