When it comes to containers, you need to master the right moves.

There’s Kubernetes this way, that way — and then there’s the “skater way,” say Ryan Beisner and Marco Ceppi. Both have deployment experience on clouds and Kubes and “all sorts of fun things on different architectures,” Beisner on the OpenStack engineering team at Canonical and Ceppi currently at TheSilphRoad. Riffing off the classic 80s video game “Skate or Die!” the pair explored different ways to kick it, flip it and ride it home with OpenStack in their talk at the recent Summit.

Come see @ryanbeisner and I talk about about “K8S or DIE”: When, why how, or if you even should use Kubernetes. https://t.co/wJRN1kOe6b pic.twitter.com/NhkCJnd5Wj

— Marco Ceppi (@marcoceppi) May 10, 2017

Why Kubernetes?

Kubernetes is surprisingly — and shockingly — just software, Ceppi says. “It’s nothing special. It’s not a magic hat. It’s not a cape that you can wrap around your neck and fly off into the free world proclaiming, ‘DevOps is done!’”

From an architecture perspective, Kubernetes is a container coordinator. “What you’re really getting is actually the abstraction of those machine resources, those VM primitives, those things where Kubernetes is running. You’re getting an abstraction of those for your Docker containers. And what it really amounts to is effectively giving you the abstraction of compute, networking, and storage for Docker-style containers,” Ceppi adds.

This sounds strikingly similar to a couple of other projects you’re probably familiar with. Kubernetes is a platform that provides the mechanism to run containers, where you say, “I’m going to run them here. They’re going to be on this network. They’re going to have potentially this storage attached, and they’re going to be running on these classes of machines or these types of processors with these compute resources.”
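As a rough illustration of that kind of request, the sketch below builds a pod manifest as a plain Python dictionary and prints it as JSON, a format Kubernetes accepts alongside YAML. The field names follow the core v1 Pod schema. The pod name, image, node label, claim name and resource figures are made up for the example and do not come from the talk.

```python
import json


def build_pod_manifest(name, image, node_label, claim_name):
    """Return a Pod manifest describing where and how a container should run.

    Every argument value used below is a hypothetical placeholder.
    """
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            # "These classes of machines": only schedule onto nodes
            # that carry this label.
            "nodeSelector": node_label,
            "containers": [
                {
                    "name": name,
                    "image": image,
                    # "These compute resources".
                    "resources": {
                        "requests": {"cpu": "500m", "memory": "256Mi"},
                        "limits": {"cpu": "1", "memory": "512Mi"},
                    },
                    # "On this network": the port the container listens on.
                    "ports": [{"containerPort": 8080}],
                    # "Potentially this storage attached".
                    "volumeMounts": [
                        {"name": "data", "mountPath": "/var/lib/app"}
                    ],
                }
            ],
            "volumes": [
                {
                    "name": "data",
                    "persistentVolumeClaim": {"claimName": claim_name},
                }
            ],
        },
    }


manifest = build_pod_manifest(
    name="web",
    image="example.com/web:1.0",
    node_label={"disktype": "ssd"},
    claim_name="web-data",
)
print(json.dumps(manifest, indent=2))
```

Saved to a file, output like this could in principle be handed to `kubectl apply -f`, assuming a cluster where the label and the storage claim actually exist.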

Because Docker containers generally have a small surface area (a single process running on a virtually immutable disk with some networking), the platform gains a lot of capabilities that were tougher in previous generations of computing, such as rollouts and rollbacks of applications. “While absolutely it’s something you could do today on traditional VMs, it took quite a lot of time to produce the tooling to get to that place,” Ceppi says.
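To make the rollout and rollback idea concrete, here is a toy model in Python. It is a sketch only: the `Deployment` class and the image tags are invented for illustration, and it is not the Kubernetes API or how Kubernetes tracks revisions internally. The point is that when a release is just an immutable image reference, moving forward or back amounts to choosing which reference is current.

```python
class Deployment:
    """Toy model of an application with a revision history."""

    def __init__(self, image):
        self.history = [image]  # oldest revision first

    @property
    def current(self):
        """The image reference that is running right now."""
        return self.history[-1]

    def rollout(self, image):
        """Record a new revision and make it the running one."""
        self.history.append(image)
        return self.current

    def rollback(self):
        """Return to the previous revision, if there is one."""
        if len(self.history) > 1:
            self.history.pop()
        return self.current


app = Deployment("web:1.0")
app.rollout("web:1.1")
print(app.current)
app.rollback()
print(app.current)
```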

In addition to that, you get things like load balancing, self healing and service discovery. Because a container’s surface area is so small and so strongly defined, it’s easy for platforms to start building these primitives where previously it was quite difficult.
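Self healing, for instance, can be pictured as a loop that compares the desired state with what is actually running and closes the gap. The Python sketch below is a simplified illustration of that pattern under made-up names (`reconcile`, `web-0` and so on), not code from Kubernetes itself.

```python
def reconcile(desired_replicas, running):
    """Return the containers that should be running after one pass.

    `running` maps a container name to True (healthy) or False (failed).
    """
    # Keep only the healthy containers.
    healthy = [name for name, ok in running.items() if ok]

    # Start replacements until the healthy count reaches the target.
    next_id = 0
    while len(healthy) < desired_replicas:
        candidate = f"web-{next_id}"
        if candidate not in healthy:
            healthy.append(candidate)
        next_id += 1

    # If there are more healthy containers than desired, trim the extras.
    return sorted(healthy[:desired_replicas])


observed = {"web-0": True, "web-1": False, "web-2": True}
print(reconcile(3, observed))
```

Because each container is a small, well-defined unit, replacing a failed one is the same operation as starting a new one, which is part of why this kind of primitive is easier to build here than for hand-managed VMs.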

“Things like Kubernetes and these platforms have gotten a lot of buzz because they have these features that arguably took a long time to solve, and there are still some areas where they can improve with operating traditional-style VMs,” he says.

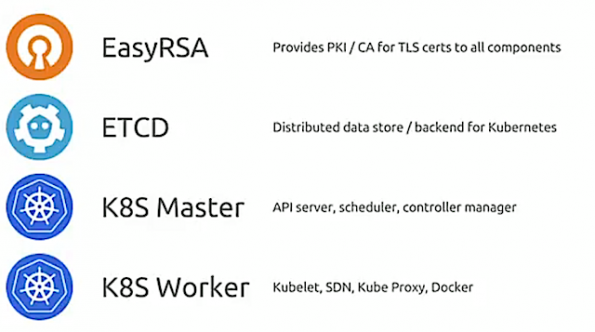

Kubernetes architecture 101

It breaks down to a few components, Ceppi notes. There’s the Kubernetes core software itself, etcd (its backing data store) and some form of SSL/TLS certificates. Those components are arranged roughly as shown in the slide below.

There’s a master control plane service, an API server, that you or your clients or users talk to. There’s a scheduler that balances workloads and handles auto healing and auto scaling. Then there’s a controller manager to coordinate all of the different moving pieces inside a deployment. Finally, there are workers.

“This is where you’re actually running your workloads, and there you’re running a thing called ‘Kubelets.’ You’re usually running a Kube proxy,” Ceppi explains. There’s your container runtime: Docker, Rocket, OCID and many others are supported now. There’s also some sort of networking SDN, a CNI (Container Network Interface) compatible component such as Flannel, Calico, Weave and a bunch of others.

That’s typically what the architecture of a Kubernetes deployment looks like. “Because a lot of this stuff is Dockerized/containerized, it lends itself to running in a very converged fashion,” Ceppi says. “A lot of times you’ll see with really just one machine, you can run a single Kubernetes cluster, but really with three machines, you can run a high availability cluster where you’re scheduling workloads. You’re also running the API control plane services, and the data store services.”
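One way to see why three machines is the usual starting point for high availability is the majority arithmetic behind a consensus-based data store such as etcd, which needs more than half of its members available to keep accepting writes. The sketch below works through that arithmetic. Tying the "three machines" remark to etcd quorum is an assumption made here for illustration, not something stated in the talk.

```python
def quorum(members):
    """Smallest majority of a cluster with `members` voting nodes."""
    return members // 2 + 1


def failures_tolerated(members):
    """How many members can be lost while a majority remains."""
    return members - quorum(members)


for n in (1, 2, 3, 5):
    print(
        f"{n} member(s): quorum {quorum(n)}, "
        f"tolerates {failures_tolerated(n)} failure(s)"
    )
```

A single machine tolerates no failures, two machines still tolerate none, and three is the smallest cluster that can lose one member and keep a majority.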

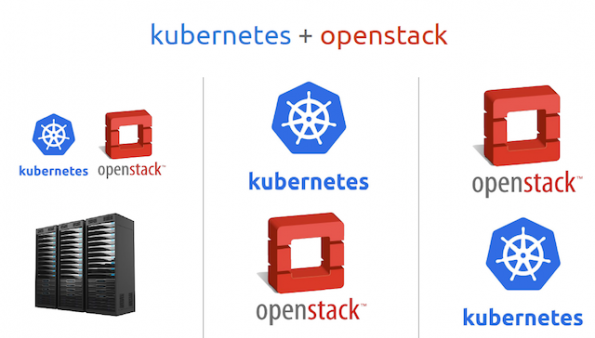

Kubernetes + OpenStack = 50-50 grind

Ceppi and Beisner outlined a few killer use cases for Kubernetes and OpenStack:

⁃ You have an existing OpenStack. You have a production cluster, perhaps you have a DevCloud, maybe you have both staging and production. Your tenants can certainly stand up Kubernetes and have individual clusters that they consume. “If I go to Amazon to deploy a Kubernetes cluster, I basically just do it on top of OpenStack, as if I had a private cloud,” Ceppi says.

⁃ You’ve got an existing OpenStack, you have some spare bare metal and you stand up your cluster of Kubernetes alongside OpenStack, Beisner says.

⁃ You are heavy into data science, love GPUs, and you’re clamoring for more metal. Dockerizing on Kubernetes on bare metal gives containers more direct access to hardware such as GPUs, without a virtualization layer in between.

⁃ You’ve got metal. You’ve got Kubernetes sitting on that metal. That Kubernetes cluster may be delivering multiple applications for your company. One of those applications might be the OpenStack control plane or other OpenStack services. Again, maybe your tenants want their own Kubernetes cluster. Dockerizing the OpenStack control plane and other services, and distributing that and managing it with Kubernetes is “gaining traction,” Beisner says.

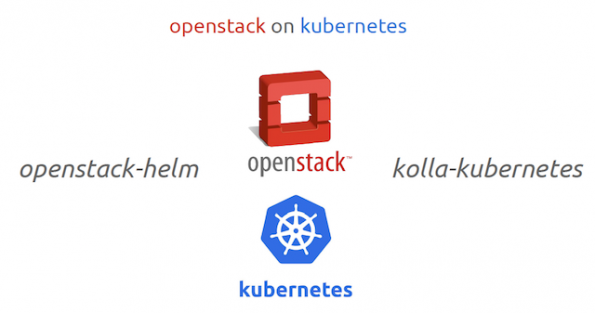

How to get there

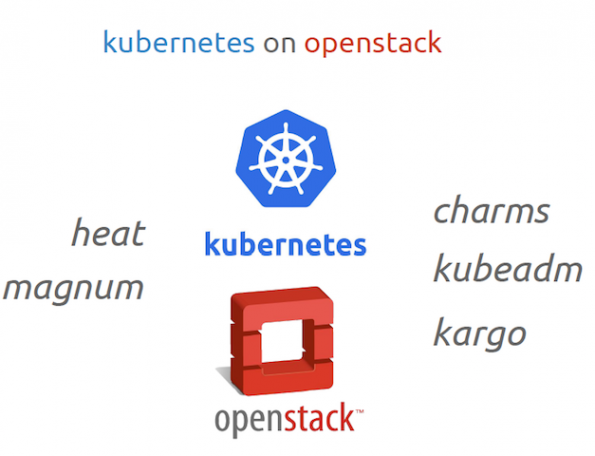

Ceppi and Beisner then outlined the projects that can help you get there, whether you’re exploring or ready to go into production.

In your toolbox, they advise having Heat templates, Charms, kubeadm and Kargo. “All of those speak the OpenStack API natively, so if you’re looking to turn up a Kubernetes cluster, those are kind of upstream Kubernetes ways of tackling that problem,” Ceppi says.

The state of stateful, stateless and the future

“I was always very happy to say, ‘You know what? The future is containers,’” Ceppi says. “But there’s a different story here now. It’s not Kubernetes versus OpenStack, but it’s really about architecture: whether it’s stateless or stateful.

“‘Stateful’ is a pretty nebulous term. What does it actually mean to be something stateful? ‘Stateless’ is pretty clear, but stateful is a really awkward term. There’s definitely a generational divide between software being built. [For example,] CockroachDB was designed to be resilient out of the box. It was designed to do clustering and peering. It’s designed for cloud native architecture, an architecture where one machine going down is expected to happen. So, while there’s a consistent state present across all those nodes, no one machine or VM or Docker container has state that, if it goes missing, will break the cluster. So, it’s a distributed stateful application and because of that, it actually survives quite well and thrives, as we saw in the Interop challenge, inside a Dockerized-container/Kubernetes deployment.

“Where we start to see the divide is not a general blanket statement that you can’t, or shouldn’t, run any stateful applications inside Kubernetes. It’s about judging which application can I run in Kubernetes? Which application should I be running in Kubernetes? A lot of times there’s a good mix of things where developers want to go really quickly, and they have an application that suits that architecture. But you’ve also got database operators and you’ve got general sysadmins who want to handcraft VMs and manage those VMs. They do things to persist that data at an infrastructure level, whether it’s snapshotting that data, making sure it’s backed up and robust, or running multiple services like cron jobs and other things on that. I think that’s fine to sit in a VM. That’s where you’ll find the intersection of Kubernetes and infrastructure management.

“Because of that, in the next 5-6 years, we’ll see a nice heterogeneous deployment of things managing VMs and things managing Docker containers. Then who knows by then. We’ll hit server-less? We’ll probably hit quantum containers by then as well, so the whole world will change,” Ceppi says. “It will be an ever-evolving landscape.”

You can catch the 39-minute talk here and download the slides here.

Cover Photo // CC BY NC
